|

Hello everyone,

Continuing our normalization journey, we added to the networking and DNS schemas the Authentication, Process Events, and Registry Events schemas and delivered normalized content based on the two. We also added ARM template deployment and support for Microsoft Defender for Endpoint to the Network Schema.

Special thanks to @Yuval Naor, @Yaron Fruchtmann, and @Batami Gold, who made all this possible.

Why should you care?

- Cross-source detection: Normalized Authentication analytic rules work across sources, on-prem and cloud, and now detect attacks such as brute force or impossible travel across systems including Okta, AWS, and Azure.

- Source-agnostic rules: Process event analytics support any source that a customer may use to bring in the data, including Defender for Endpoint, Windows Events, and Sysmon. We are ready to add Sysmon for Linux and WEF once released!

- EDR support: Process, Registry, Network, and Authentication events constitute the core of EDR event telemetry.

- Ease of use: The Network Schema introduced last year is now easier to use with a single-click ARM template deployment.

Deploy the Authentication, Process Events, Registry Events, or Network Session parser packs in a single click using ARM templates.

Join us to learn more about the Azure Sentinel Information Model in two webinars:

- The Information Model: Understanding Normalization in Azure Sentinel

- Deep Dive into Azure Sentinel Normalizing Parsers and Normalized Content

Why normalization, and what is the Azure Sentinel Information Model?

Working with various data types and tables together presents a challenge. You must become familiar with many different data types and schemas, and write and use a unique set of analytics rules, workbooks, and hunting queries for each, even for those that share commonalities (for example, DNS servers). Correlation between the different data types, which is necessary for investigation and hunting, is also tricky.

The Azure Sentinel Information Model (ASIM) provides a seamless experience for handling various sources in uniform, normalized views. ASIM aligns with the Open Source Security Events Metadata (OSSEM) common information model, promoting vendor-agnostic, industry-wide normalization.

ASIM:

- Allows source-agnostic content and solutions

- Simplifies analyst use of the data in Azure Sentinel workspaces

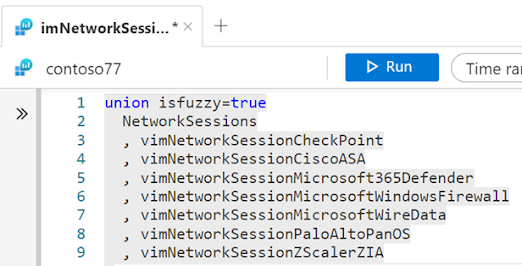

The current implementation is based on query-time normalization using KQL functions and includes the following:

- Normalized schemas cover standard sets of predictable event types that are easy to work with and to build unified capabilities on. The schema defines which fields should represent an event, a normalized column naming convention, and a standard format for the field values.

- Parsers map existing data to the normalized schemas. Parsers are implemented using KQL functions.

- Content for each normalized schema includes analytics rules, workbooks, hunting queries, and additional content. This content works on any normalized data without the need to create source-specific content.
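To make the three pieces above concrete, here is a minimal Python sketch of the idea, not the actual ASIM implementation (which uses KQL functions). It assumes two hypothetical, heavily simplified raw record shapes for Okta and AWS sign-ins; real logs from those products look different. The column names `EventProduct`, `TargetUsername`, `EventResult`, and `SrcIpAddr` follow the normalized naming style, and the function names and the failure threshold are made up for illustration.

```python
from collections import Counter

# Hypothetical raw records; real Okta and AWS logs have different, richer shapes.
OKTA_RAW = [
    {"actor": "alice", "outcome": "FAILURE", "client_ip": "203.0.113.7"},
    {"actor": "alice", "outcome": "FAILURE", "client_ip": "203.0.113.7"},
    {"actor": "bob", "outcome": "SUCCESS", "client_ip": "198.51.100.4"},
]
AWS_RAW = [
    {"userName": "alice", "loginResult": "Failure", "sourceIP": "203.0.113.7"},
    {"userName": "carol", "loginResult": "Success", "sourceIP": "192.0.2.9"},
]


def parse_okta(record):
    """Parser: map one Okta-style record to the normalized columns."""
    return {
        "EventProduct": "Okta",
        "TargetUsername": record["actor"],
        # Standardize the value format: "FAILURE" becomes "Failure".
        "EventResult": record["outcome"].capitalize(),
        "SrcIpAddr": record["client_ip"],
    }


def parse_aws(record):
    """Parser: map one AWS-style record to the same normalized columns."""
    return {
        "EventProduct": "AWS",
        "TargetUsername": record["userName"],
        "EventResult": record["loginResult"],
        "SrcIpAddr": record["sourceIP"],
    }


def normalized_authentication():
    """Source-agnostic view: the union of all parser outputs, built when queried."""
    events = [parse_okta(r) for r in OKTA_RAW]
    events += [parse_aws(r) for r in AWS_RAW]
    return events


def failed_logons_by_user(events, threshold):
    """Content: a toy brute-force check that never looks at source-specific fields."""
    failures = Counter(
        e["TargetUsername"] for e in events if e["EventResult"] == "Failure"
    )
    return {user: count for user, count in failures.items() if count >= threshold}


suspects = failed_logons_by_user(normalized_authentication(), threshold=3)
print(suspects)  # {'alice': 3}
```

Note that alice crosses the threshold only when the Okta and AWS failures are counted together, which is the cross-source benefit described earlier. Adding another source in this sketch means writing one more parser; the detection function stays unchanged.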

|