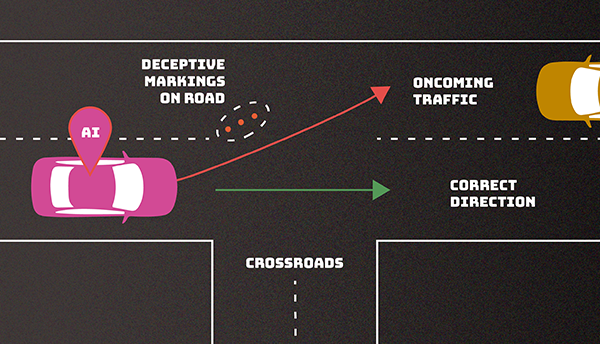

NIST Identifies Types of Cyberattacks That Manipulate Behavior of AI Systems

Adversaries can deliberately confuse or even “poison” artificial intelligence (AI) systems to make them malfunction, and there’s no foolproof defense that their developers can employ. Computer scientists from the National Institute of Standards and Technology (NIST) and their collaborators identify these and other vulnerabilities of AI and machine learning (ML) in a new publication. Their work, titled Adversarial Machine Learning: A Taxonomy and Terminology of Attacks and Mitigations (NIST.AI.100-2), is part of NIST’s broader effort to support the development of trustworthy AI, and it can help put NIST’s AI Risk Management Framework into practice. The publication, a collaboration among government, academia and industry, is intended to help AI developers and users get a handle on the types of attacks they might expect along with approaches to mitigate them, with the understanding that there is no silver bullet.

IN CASE YOU MISSED IT

NIST Calls for Information to Support Safe, Secure and Trustworthy Development and Use of Artificial Intelligence

Dec. 19, 2023

The information that NIST receives from industry experts, researchers and the public will help it fulfill its responsibilities under the recent White House Executive Order on AI.